Federated Learning on Edge Devices: Training Local LLMs Privately

Key Takeaways

- Federated learning enables privacy-preserving LLM training on edge devices.

- Offline-first React Native apps benefit from local LLM capabilities without exposing raw data.

- This approach enhances data security and user experience by keeping data on the device.

- Consider federated learning to unlock value from on-device data securely.

The Data Privacy Conundrum at the Edge

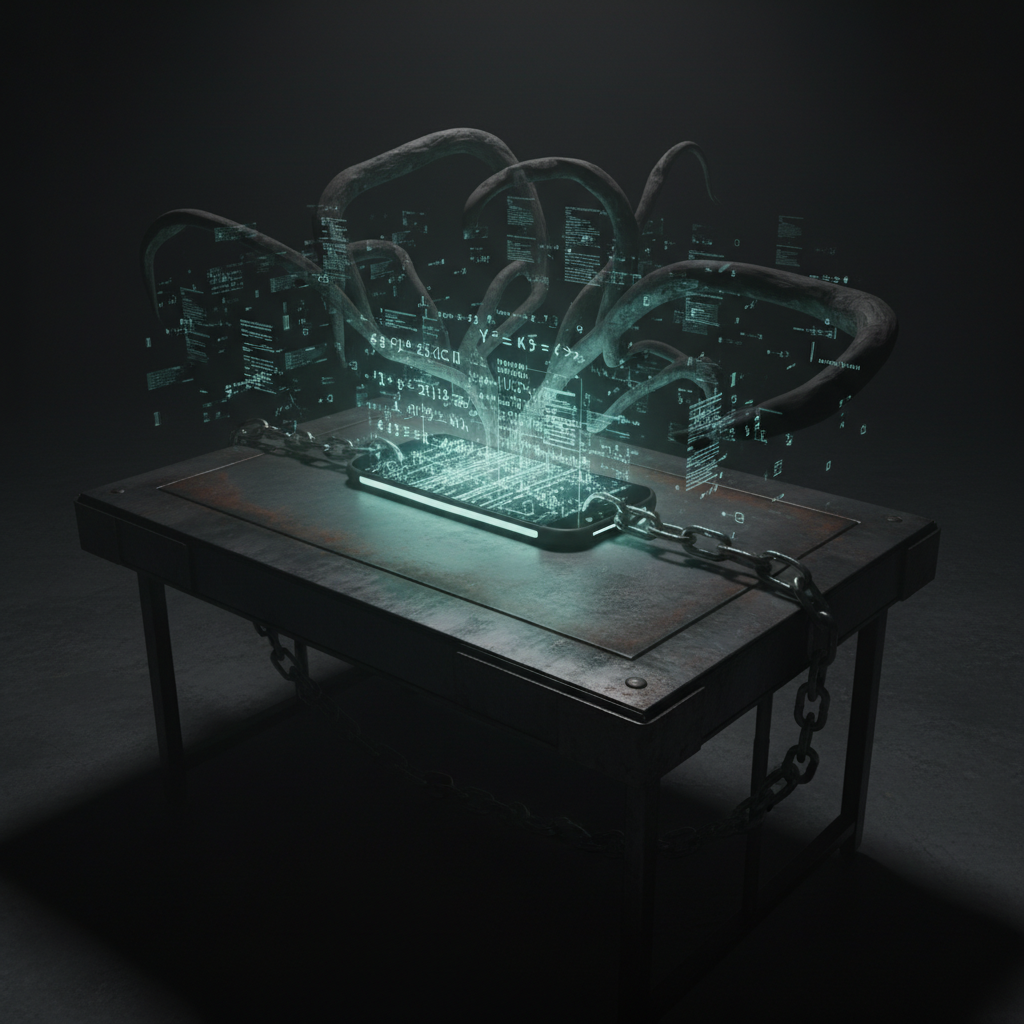

Imagine your data as a delicate ecosystem, teeming with valuable insights. Now, picture trying to extract those insights without disturbing the balance of that ecosystem. That’s the challenge we face when training large language models (LLMs) on edge devices, especially in offline-first applications. The ideal scenario is to use each user’s data to improve models locally, without ever transmitting sensitive information to a central server. The core issue? Data privacy. The solution? Federated learning.

The logistics, construction, and field service sectors are sitting on a gold mine of untapped data. From equipment usage patterns to customer interaction logs, this data holds the key to significant optimisation. However, much of this data lives on mobile devices in remote locations, often with limited or intermittent connectivity. Traditional cloud-based machine learning approaches are often impractical here, from both a connectivity and a data privacy perspective. This is where federated learning steps in as a powerful alternative.

Federated Learning: A Collaborative Approach to Model Training

Federated learning is a distributed machine learning technique that allows models to be trained across a multitude of decentralized edge devices. Think of it as a team of specialists working together, each contributing their expertise without revealing their individual findings. Each device trains a local model on its own data, and then only the model updates are sent to a central server, where they are aggregated to create a global model. The raw data stays put, safely residing on the user’s device.

In an offline-first React Native application, this means that the LLM can be trained locally on the user’s device, improving its performance without requiring a constant internet connection. Model updates are held on the device and sent to the server the next time a connection is available. This is particularly valuable in industries like logistics and construction, where workers often operate in areas with poor or no network coverage. The application can still learn and adapt to the user’s specific needs, even when offline, providing a seamless and personalised experience. It’s like having a local expert available at all times, even when cut off from the main office.
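To make the offline part concrete, here is a minimal sketch of how an app might hold model updates until a connection is available. Everything in it is an assumption for illustration: `ModelUpdate`, `PendingUpdateQueue`, and the `trySend` callback are hypothetical names, not part of React Native or any library, and the send is modelled as a synchronous callback to keep the sketch short (a real network call would be asynchronous, and the queue would be persisted to local storage).

```typescript
// Hypothetical shape of one locally trained update. Not from any library.
type ModelUpdate = { round: number; weights: number[]; sampleCount: number };

// Hypothetical transport callback: returns true if the server accepted the update.
type TrySend = (update: ModelUpdate) => boolean;

class PendingUpdateQueue {
  private pending: ModelUpdate[] = [];

  // Called after every local training pass, whether or not the device is online.
  enqueue(update: ModelUpdate): void {
    this.pending.push(update);
  }

  // Number of updates still waiting to be sent.
  size(): number {
    return this.pending.length;
  }

  // Called when connectivity returns. Sends oldest first and stops at the
  // first failure, so no update is dropped or reordered.
  flush(trySend: TrySend): number {
    let sent = 0;
    while (this.pending.length > 0) {
      const next = this.pending[0];
      if (next === undefined || !trySend(next)) break;
      this.pending.shift();
      sent += 1;
    }
    return sent;
  }
}
```

The app would call `enqueue` after each local training pass and `flush` whenever it detects a connection. Only model updates ever enter the queue, so the raw data still never leaves the device.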

The Architecture of Privacy: How It Works

The beauty of federated learning lies in its elegant architecture. Each edge device (e.g., a mobile phone running your React Native app) becomes a mini-training ground for the LLM. Here’s how it typically unfolds:

- Local Training: The device uses its locally stored data to train a copy of the global model.

- Update Submission: Only the updated model parameters (e.g., weights and biases) are sent to a central server. The raw data remains on the device.

- Aggregation: The central server aggregates these updates from multiple devices to create an improved global model.

- Model Distribution: The updated global model is then distributed back to the edge devices for further local training.
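The four steps above can be sketched in a few lines. This is a toy illustration under stated assumptions, not a production recipe: the "model" is a tiny linear model rather than an LLM, aggregation uses sample-weighted averaging (commonly known as federated averaging), and all names (`Example`, `ClientUpdate`, `trainLocally`, `federatedAverage`, `runRound`) and the hyperparameters are hypothetical.

```typescript
// Illustrative types, not taken from any library.
type Example = { x: number[]; y: number };
type ClientUpdate = { weights: number[]; sampleCount: number };

// Local training: gradient descent on a toy linear model y = w . x.
// The raw `data` is only ever read inside this function, on the device.
function trainLocally(
  globalWeights: number[],
  data: Example[],
  learningRate: number,
  epochs: number
): ClientUpdate {
  const w = globalWeights.slice(); // copy so the shared global model is not mutated
  for (let epoch = 0; epoch < epochs; epoch++) {
    for (const ex of data) {
      let prediction = 0;
      for (let i = 0; i < w.length; i++) prediction += w[i] * ex.x[i];
      const error = prediction - ex.y;
      for (let i = 0; i < w.length; i++) w[i] -= learningRate * error * ex.x[i];
    }
  }
  // Update submission: only the weights and a sample count leave the device.
  return { weights: w, sampleCount: data.length };
}

// Aggregation: average the client weights, weighted by each client's sample count.
function federatedAverage(updates: ClientUpdate[]): number[] {
  const total = updates.reduce((sum, u) => sum + u.sampleCount, 0);
  if (updates.length === 0 || total === 0) {
    throw new Error("no updates to aggregate");
  }
  const dim = updates[0].weights.length;
  const result: number[] = [];
  for (let i = 0; i < dim; i++) {
    let acc = 0;
    for (const u of updates) acc += u.weights[i] * (u.sampleCount / total);
    result.push(acc);
  }
  return result;
}

// One full round. The returned weights are what would be distributed
// back to the devices as the new global model.
function runRound(globalWeights: number[], devices: Example[][]): number[] {
  const updates = devices.map((data) => trainLocally(globalWeights, data, 0.1, 5));
  return federatedAverage(updates);
}
```

Calling `runRound` repeatedly, feeding each result back in as the next `globalWeights`, mirrors the loop described above. Weighting by sample count is one reasonable design choice: devices with more data pull the global model harder than devices with very little.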

React Native and Federated Learning: A Perfect Match

React Native’s cross-platform capabilities, combined with an offline-first architecture, make it a practical platform for implementing federated learning. The ability to build a single application that runs on both iOS and Android devices significantly simplifies development and deployment. Furthermore, background tasks and local storage, typically provided through native modules and community libraries, allow models to be trained and stored on the device.

Data Security: Beyond Privacy

Federated learning does more than just protect privacy. It also strengthens data security by reducing exposure to data breaches and cyberattacks. Because raw data stays on the user’s device, there is no central store of raw data for an attacker to target. This is especially important in industries where data security is paramount, such as healthcare and finance.

However, it is important to acknowledge that federated learning is not a silver bullet. Model updates themselves can leak information about the underlying data. Techniques such as differential privacy address this by limiting how much any single update can contribute and adding calibrated noise to the model updates, further obscuring individual data points. Think of it as blurring each individual contribution so that the overall picture stays useful while no single user’s data can be picked out.
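As a rough illustration of adding noise to model updates, the sketch below clips an update to a maximum size and then adds Gaussian noise before it leaves the device. It rests on several assumptions: the update is treated as a plain array of numbers (for example, local weights minus global weights), noise is added on the device rather than on the server, and `clipNorm` and `noiseMultiplier` are hypothetical parameters. It does not compute a formal privacy guarantee.

```typescript
// Scale the update down if its L2 norm exceeds clipNorm, which bounds
// how much any single device can influence the global model.
function clipUpdate(update: number[], clipNorm: number): number[] {
  const norm = Math.sqrt(update.reduce((sum, v) => sum + v * v, 0));
  const scale = norm > clipNorm ? clipNorm / norm : 1;
  return update.map((v) => v * scale);
}

// Standard normal sample via the Box-Muller transform.
// `rand` must return uniform values in [0, 1).
function gaussian(rand: () => number): number {
  const u1 = 1 - rand(); // shift to (0, 1] so Math.log never receives 0
  const u2 = rand();
  return Math.sqrt(-2 * Math.log(u1)) * Math.cos(2 * Math.PI * u2);
}

// Clip, then add independent Gaussian noise to every coordinate.
// Math.random is used as the default only for illustration: it is not
// cryptographically secure, and a real deployment would need a secure source.
function privatizeUpdate(
  update: number[],
  clipNorm: number,
  noiseMultiplier: number,
  rand: () => number = Math.random
): number[] {
  const sigma = noiseMultiplier * clipNorm; // noise scale tied to the clipping bound
  return clipUpdate(update, clipNorm).map((v) => v + sigma * gaussian(rand));
}
```

A device would call something like `privatizeUpdate(delta, 1.0, 0.5)` before submitting its update. A larger `noiseMultiplier` hides individual contributions better but costs model accuracy, so the value is a trade-off to tune rather than a fixed constant.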

Dendro Logic Perspective

At Dendro Logic, we see federated learning as a key enabler for unlocking the value of data at the edge. By bringing the power of LLMs to offline-first applications while preserving data privacy, we can help businesses in logistics, construction, and field services gain a competitive advantage. API-first design has become the nervous system of modern companies, and federated learning lets the peripheral systems think for themselves.

Ready to explore how federated learning can transform your data architecture and unlock new possibilities for your business? Contact us today for an audit of your logic problems.